From zero to 1 million doodle videos in the first year.

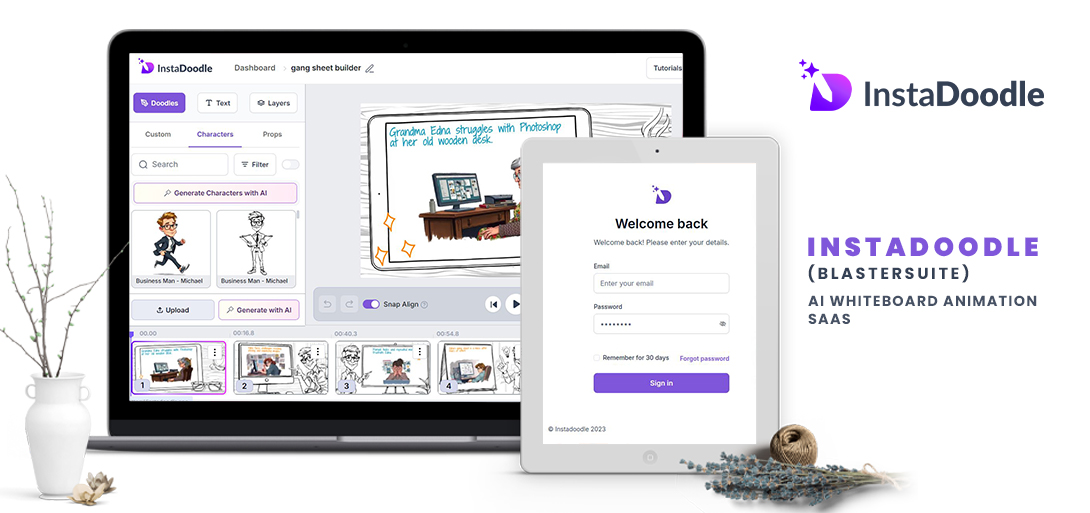

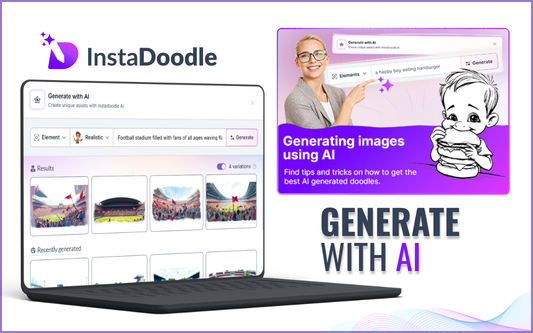

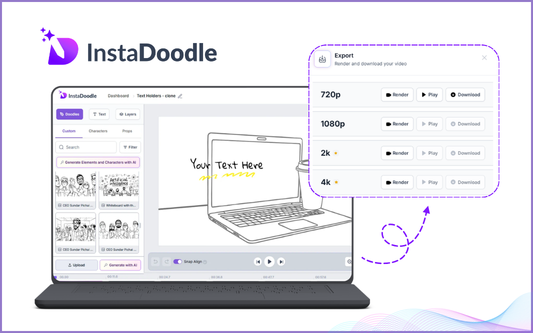

How we built InstaDoodle from scratch in 2025: an AI-powered whiteboard animation platform that renders the same animation pixel-for-pixel in the browser and on the server. Here's what we built, the technical problems we solved, and the numbers that came out the other side.